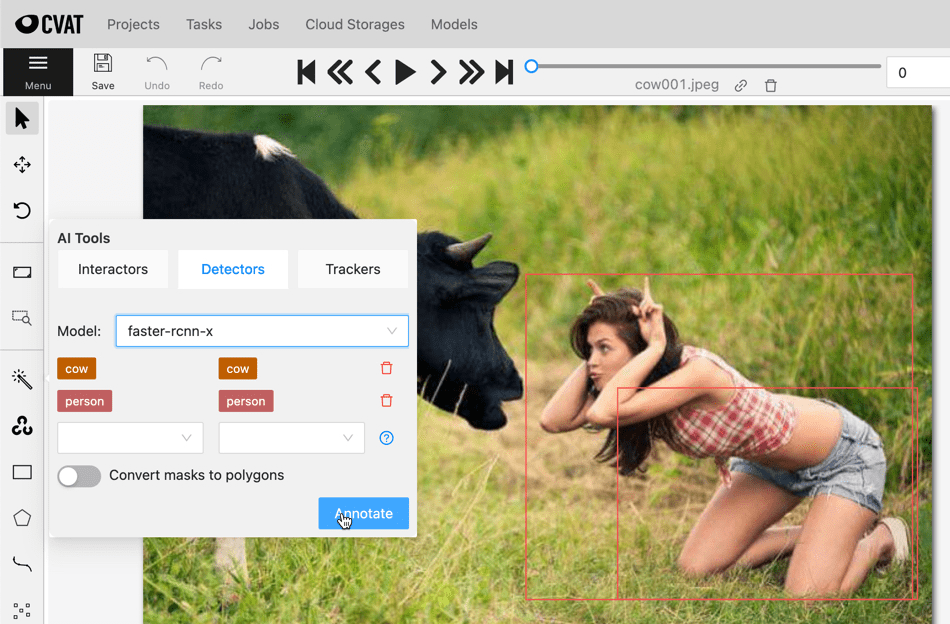

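Nuclio model deployment: MMDetection

Main.py

Inference code to use as a reference when writing main.py

inference demo

Before writing the handler in main.py, check the inference demo, in particular these two functions:

– init_detector

– inference_detector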

import os
import warnings
from pathlib import Path

import numpy as np
import torch
import mmcv
from mmcv.ops import RoIPool
from mmcv.parallel import collate, scatter
from mmcv.runner import load_checkpoint
from mmdet.core import get_classes
from mmdet.datasets import replace_ImageToTensor
from mmdet.datasets.pipelines import Compose
from mmdet.models import build_detector
from mmdet.apis import init_detector
from mmdet.apis import inference_detector
from mmdet.apis import show_result_pyplot

INPUT_IMG = "./imgs/demo.jpg"
OUTPUT_FILE = "./imgs/demo_out.jpg"

# Use the first config (.py) and the first checkpoint (.pth) found in ./param
CONFIG = [os.path.join('./param', filenm) for filenm in os.listdir('./param') if filenm.endswith('.py')][0]
CHKPNT = [os.path.join('./param', filenm) for filenm in os.listdir('./param') if filenm.endswith('.pth')][0]
# ROOT = "/home/oschung_skcc/my/git/mmdetection/"
# CONFIG = os.path.join(ROOT, "sixxconfigs/faster_rcnn_r50_fpn_1x_coco_001.py")
# CHECKPOINT = os.path.join(ROOT, "checkpoints/faster_rcnn_r50_fpn_1x_coco_20200130-047c8118.pth")

SCORE_THRESHOLD = 0.9
DEVICE = "cuda:6"
PALETTE = "coco"  # choices=['coco', 'voc', 'citys', 'random']

# def parse_args():
#     parser = ArgumentParser()
#     parser.add_argument('img', help='Image file')
#     parser.add_argument('config', help='Config file')
#     parser.add_argument('checkpoint', help='Checkpoint file')
#     parser.add_argument('--out-file', default=None, help='Path to output file')
#     parser.add_argument('--device', default='cuda:0', help='Device used for inference')
#     parser.add_argument('--palette', default='coco', choices=['coco', 'voc', 'citys', 'random'], help='Color palette used for visualization')
#     parser.add_argument('--score-thr', type=float, default=0.3, help='bbox score threshold')
#     parser.add_argument('--async-test', action='store_true', help='whether to set async options for async inference.')
#     args = parser.parse_args()
#     return args

import json
import yaml

FYAML = "./function.yaml"

# The label list is stored as a JSON string under metadata.annotations.spec
with open(FYAML, 'rb') as function_file:
    functionconfig = yaml.safe_load(function_file)
labels_spec = functionconfig['metadata']['annotations']['spec']
classes = json.loads(labels_spec)  # the spec is JSON text, so eval() is not needed
# classes = [
#     { "id": 1, "name": "person" },
#     { "id": 2, "name": "bicycle" },
#     { "id": 3, "name": "car" },
#     ...
#     { "id":88, "name": "teddy_bear" },
#     { "id":89, "name": "hair_drier" },
#     { "id":90, "name": "toothbrush" }
# ]

if __name__ == '__main__':
    # build the model from a config file and a checkpoint file
    model = init_detector(CONFIG, CHKPNT, device=DEVICE)

    # test a single image
    result = inference_detector(model, INPUT_IMG)

    # show the results
    show_result_pyplot(
        model, INPUT_IMG, result,
        palette=PALETTE,
        score_thr=SCORE_THRESHOLD,
    )

    # -----------------------------------------------------------------
    img = mmcv.imread(INPUT_IMG)
    # models with a mask head return (bbox_result, segm_result)
    if isinstance(result, tuple):
        bbox_result, segm_result = result
        if isinstance(segm_result, tuple):
            segm_result = segm_result[0]  # discard the `dim`
    else:
        bbox_result, segm_result = result, None
    img = img.copy()

    # bbox_result holds one (n, 5) array per class: x1, y1, x2, y2, score
    bboxes = np.vstack(bbox_result)
    labels = [
        np.full(bbox.shape[0], i, dtype=np.int32)
        for i, bbox in enumerate(bbox_result)
    ]
    labels = np.concatenate(labels)
    scores = bboxes[:, -1]
    inds = scores > SCORE_THRESHOLD
    bboxes = bboxes[inds, :]  # points
    labels = labels[inds]  # label
    scores = scores[inds]  # confidence

    encoded_results = []
    if bboxes.shape[0] > 0:
        for i in range(bboxes.shape[0]):
            encoded_results.append({
                'confidence': float(scores[i]),
                'label': classes[labels[i]]['name'],
                'points': bboxes[i][:4].tolist(),  # drop the trailing score column
                'type': 'rectangle'
            })
    print(encoded_results)
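The post-processing above depends on the layout that `inference_detector` returns for box-only models in MMDetection 2.x: one array per class, each row being `[x1, y1, x2, y2, score]`. The sketch below replays the same flatten-and-filter logic on plain Python lists, so it runs without mmdet or a GPU. The function name `flatten_detections` and the sample numbers are made up for illustration.

```python
def flatten_detections(bbox_result, class_names, score_thr=0.9):
    """Flatten per-class detections into a list of dicts.

    bbox_result: one list per class; each row is [x1, y1, x2, y2, score].
    class_names: names ordered like the model's 0-based class indices.
    """
    detections = []
    for class_idx, rows in enumerate(bbox_result):
        for row in rows:
            *points, score = row
            if score > score_thr:
                detections.append({
                    "confidence": float(score),
                    "label": class_names[class_idx],
                    "points": [float(v) for v in points],
                    "type": "rectangle",
                })
    return detections


# Hypothetical output of a 3-class model; class 1 has no detections.
sample_result = [
    [[10.0, 20.0, 110.0, 220.0, 0.98], [5.0, 5.0, 50.0, 50.0, 0.40]],
    [],
    [[30.0, 40.0, 90.0, 120.0, 0.95]],
]
detections = flatten_detections(sample_result, ["person", "bicycle", "car"])
for det in detections:
    print(det["label"], det["confidence"], det["points"])
```

The class index comes only from the position of each array in the outer list, which is why the label list has to be kept in the model's class order.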

2. Apply the source code that runs the DL model locally to the Nuclio platform

2-1 Load the model into memory (using the init_context(context) function)

2-2 Define the handler entry point for the following steps and put it in main.py.

- accept incoming HTTP requests

- run inference

- reply with detection results
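The two entry points can be exercised locally before any model is involved. The sketch below is a minimal skeleton under stated assumptions: the "model" is a stub function, and `fake_context` and `fake_event` are hypothetical stand-ins that only imitate the attributes this tutorial uses (`user_data`, `Response`, `body`), not the real Nuclio objects.

```python
import json
from types import SimpleNamespace


def init_context(context):
    """Runs once per worker: load the model and keep it on the context."""
    # Stub model for illustration; the real code calls init_detector here.
    context.user_data.model = lambda image: [{"label": "stub", "confidence": 1.0}]


def handler(context, event):
    """Runs per request: read the body, run inference, build the reply."""
    detections = context.user_data.model(event.body["image"])
    return context.Response(
        body=json.dumps(detections),
        headers={},
        content_type="application/json",
        status_code=200,
    )


# Hypothetical stand-ins for the objects Nuclio passes in (local runs only).
fake_context = SimpleNamespace(
    user_data=SimpleNamespace(),
    Response=lambda **kwargs: SimpleNamespace(**kwargs),
)
fake_event = SimpleNamespace(body={"image": "<base64 string>"})

init_context(fake_context)
response = handler(fake_context, fake_event)
print(response.status_code, response.body)
```

Keeping the model on `context.user_data` means it is loaded once in `init_context` and reused by every call to `handler`.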

# Copyright onesixx. All rights reserved.
CONFIG = '/opt/nuclio/param/faster_rcnn_r50_fpn_1x_coco_001.py'
CHKPNT = '/opt/nuclio/param/faster_rcnn_r50_fpn_1x_coco_20200130-047c8118.pth'
FYAML = "/opt/nuclio/function.yaml"
SCORE_THRESHOLD = 0.9
DEVICE = "cpu"  # "cuda:6"
PALETTE = "coco"

import os
import mmcv
from mmdet.apis import init_detector
from mmdet.apis import inference_detector

import io
import base64
from PIL import Image
import numpy as np
import yaml
import json
# from model_loader import ModelLoader

# For TEST
# CONFIG = [os.path.join("./param", filenm) for filenm in os.listdir('./param') if filenm.endswith('.py')][0]
# CHKPNT = [os.path.join("./param", filenm) for filenm in os.listdir('./param') if filenm.endswith('.pth')][0]
# FYAML = "./function.yaml"

# The label list is stored as a JSON string under metadata.annotations.spec
with open(FYAML, 'rb') as function_file:
    functionconfig = yaml.safe_load(function_file)
labels_spec = functionconfig['metadata']['annotations']['spec']
classes = json.loads(labels_spec)  # the spec is JSON text, so eval() is not needed
# classes = [ { "id": 1, "name": "person" },...]

def init_context(context):
    context.logger.info("Init context...  0%")  # --------------------------------
    # load the model once per worker and keep it on the context
    model_handler = init_detector(CONFIG, CHKPNT, device=DEVICE)
    # model_handler = ModelLoader(classes)
    context.user_data.model = model_handler
    context.logger.info("Init context... 100%")  # ------------------------------

def handler(context, event):
    context.logger.info("Run sixx model")
    data = event.body
    buf = io.BytesIO(base64.b64decode(data["image"]))
    # threshold = float(data.get("threshold", SCORE_THRESHOLD))
    # context.user_data.model.conf = threshold
    # buf = './imgs/demo.jpg'
    image = Image.open(buf).convert("RGB")
    # PIL decodes to RGB, while mmcv.imread (used in the demo) yields BGR.
    # Flip the channels, assuming the config normalizes with to_rgb=True
    # as the stock Faster R-CNN configs do.
    imgArray = np.ascontiguousarray(np.array(image)[:, :, ::-1])

    # result = inference_detector(model_handler, imgArray)
    result = inference_detector(context.user_data.model, imgArray)

    # models with a mask head return (bbox_result, segm_result)
    if isinstance(result, tuple):
        bbox_result, segm_result = result
        if isinstance(segm_result, tuple):
            segm_result = segm_result[0]  # discard the `dim`
    else:
        bbox_result, segm_result = result, None
    img = image.copy()

    # bbox_result holds one (n, 5) array per class: x1, y1, x2, y2, score
    bboxes = np.vstack(bbox_result)
    labels = [
        np.full(bbox.shape[0], i, dtype=np.int32)
        for i, bbox in enumerate(bbox_result)
    ]
    labels = np.concatenate(labels)
    scores = bboxes[:, -1]
    inds = scores > SCORE_THRESHOLD
    scores = scores[inds]  # confidence
    labels = labels[inds]  # label
    bboxes = bboxes[inds, :]  # points

    # if show_mask and segm_result is not None:
    #     segms = mmcv.concat_list(segm_result)
    #     segms = [segms[i] for i in np.where(inds)[0]]
    #     if palette is None:
    #         palette = color_val_iter()
    #     colors = [next(palette) for _ in range(len(segms))]

    encoded_results = []
    if bboxes.shape[0] > 0:
        for i in range(bboxes.shape[0]):
            encoded_results.append({
                'confidence': float(scores[i]),
                'label': classes[labels[i]]['name'],
                'points': bboxes[i][:4].tolist(),
                'type': 'rectangle'
            })

    return context.Response(
        body=json.dumps(encoded_results),
        headers={},
        content_type='application/json',
        status_code=200
    )

2. function.yaml

Base Dockerfile

$ pwd
~/my/git/mmdetection/docker
$ docker build -t base.mmdet .

Create a new image on top of the base Docker image

metadata:
  name: mmdet-faster-rcnn-x
  namespace: cvat
  annotations:
    name: faster-rcnn-x
    type: detector
    framework: pytorch
    spec: |
      [
        { "id": 1, "name": "person" },
        { "id": 2, "name": "bicycle" },
        { "id": 3, "name": "car" },
        { "id": 4, "name": "motorcycle" },
        { "id": 5, "name": "airplane" },
        { "id": 6, "name": "bus" },
        { "id": 7, "name": "train" },
        { "id": 8, "name": "truck" },
        { "id": 9, "name": "boat" },
        { "id":10, "name": "traffic_light" },
        { "id":11, "name": "fire_hydrant" },
        { "id":13, "name": "stop_sign" },
        { "id":14, "name": "parking_meter" },
        { "id":15, "name": "bench" },
        { "id":16, "name": "bird" },
        { "id":17, "name": "cat" },
        { "id":18, "name": "dog" },
        { "id":19, "name": "horse" },
        { "id":20, "name": "sheep" },
        { "id":21, "name": "cow" },
        { "id":22, "name": "elephant" },
        { "id":23, "name": "bear" },
        { "id":24, "name": "zebra" },
        { "id":25, "name": "giraffe" },
        { "id":27, "name": "backpack" },
        { "id":28, "name": "umbrella" },
        { "id":31, "name": "handbag" },
        { "id":32, "name": "tie" },
        { "id":33, "name": "suitcase" },
        { "id":34, "name": "frisbee" },
        { "id":35, "name": "skis" },
        { "id":36, "name": "snowboard" },
        { "id":37, "name": "sports_ball" },
        { "id":38, "name": "kite" },
        { "id":39, "name": "baseball_bat" },
        { "id":40, "name": "baseball_glove" },
        { "id":41, "name": "skateboard" },
        { "id":42, "name": "surfboard" },
        { "id":43, "name": "tennis_racket" },
        { "id":44, "name": "bottle" },
        { "id":46, "name": "wine_glass" },
        { "id":47, "name": "cup" },
        { "id":48, "name": "fork" },
        { "id":49, "name": "knife" },
        { "id":50, "name": "spoon" },
        { "id":51, "name": "bowl" },
        { "id":52, "name": "banana" },
        { "id":53, "name": "apple" },
        { "id":54, "name": "sandwich" },
        { "id":55, "name": "orange" },
        { "id":56, "name": "broccoli" },
        { "id":57, "name": "carrot" },
        { "id":58, "name": "hot_dog" },
        { "id":59, "name": "pizza" },
        { "id":60, "name": "donut" },
        { "id":61, "name": "cake" },
        { "id":62, "name": "chair" },
        { "id":63, "name": "couch" },
        { "id":64, "name": "potted_plant" },
        { "id":65, "name": "bed" },
        { "id":67, "name": "dining_table" },
        { "id":70, "name": "toilet" },
        { "id":72, "name": "tv" },
        { "id":73, "name": "laptop" },
        { "id":74, "name": "mouse" },
        { "id":75, "name": "remote" },
        { "id":76, "name": "keyboard" },
        { "id":77, "name": "cell_phone" },
        { "id":78, "name": "microwave" },
        { "id":79, "name": "oven" },
        { "id":80, "name": "toaster" },
        { "id":81, "name": "sink" },
        { "id":83, "name": "refrigerator" },
        { "id":84, "name": "book" },
        { "id":85, "name": "clock" },
        { "id":86, "name": "vase" },
        { "id":87, "name": "scissors" },
        { "id":88, "name": "teddy_bear" },
        { "id":89, "name": "hair_drier" },
        { "id":90, "name": "toothbrush" }
      ]

spec:
  description: faster-rcnn-x
  runtime: "python:3.8"
  handler: main:handler
  eventTimeout: 30s
  env:
    - name: MMDETECTION_PATH
      value: /opt/nuclio/mmdetection

  build:
    image: cvat/sixx.mm.fast
    baseImage: base.mmdet # base.me # competent_dubinsky

    directives:
      preCopy:
        - kind: USER
          value: root
        - kind: WORKDIR
          value: /opt/nuclio

  triggers:
    myHttpTrigger:
      maxWorkers: 2
      kind: "http"
      workerAvailabilityTimeoutMilliseconds: 10000
      attributes:
        maxRequestBodySize: 33554432 # 32MB

  platform:
    attributes:
      restartPolicy:
        name: always
        maximumRetryCount: 3
      mountMode: volume
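One detail of the label list is easy to miss: the `id` values follow the original COCO numbering, which skips some numbers (there is no 12 or 26, for example), while main.py looks labels up by list position, not by `id`. The sketch below illustrates this with a shortened, made-up excerpt in the same format as `annotations.spec`; `label_name` is a hypothetical helper.

```python
import json

# Shortened excerpt in the same format as annotations.spec (illustrative only).
labels_spec = """
[
  { "id": 1, "name": "person" },
  { "id": 2, "name": "bicycle" },
  { "id": 13, "name": "stop_sign" }
]
"""

classes = json.loads(labels_spec)


def label_name(class_index):
    """Map a 0-based model class index to its name by list position."""
    return classes[class_index]["name"]


# Index 2 is the third entry; its COCO-style id is 13, not 3.
print(label_name(2), classes[2]["id"])
```

Because the lookup is positional, reordering or removing entries in the spec silently changes which name each model class receives.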

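(Optional) Settings for accessing the model from outside

Specify the Nuclio function's port number and the CVAT network

Add a fixed port under spec.triggers.myHttpTrigger.attributes, and add the Docker network that was created when Docker Compose was run (cvat_cvat) under spec.platform.attributes.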

  triggers:
    myHttpTrigger:
      maxWorkers: 2
      kind: 'http'
      workerAvailabilityTimeoutMilliseconds: 10000
      attributes:
        maxRequestBodySize: 33554432
        port: 33000 # added port

  platform:
    attributes:
      restartPolicy:
        name: always
        maximumRetryCount: 3
      mountMode: volume
      network: cvat_cvat # added Docker network
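With the port pinned as above, the function can be called from the host with a plain HTTP POST. The sketch below builds such a request with `urllib`. The host `localhost`, the helper names `build_request` and `detect`, and the image path in the usage comment are assumptions; port 33000 is the value from the snippet above. Building the request is kept separate from sending it, so the payload can be inspected without a running function.

```python
import base64
import json
import urllib.request


def build_request(image_bytes, url="http://localhost:33000"):
    """Build a POST request carrying a base64-encoded image as JSON."""
    payload = json.dumps(
        {"image": base64.b64encode(image_bytes).decode("ascii")}
    ).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def detect(image_path, url="http://localhost:33000", timeout=30):
    """Send an image file to the function and return the parsed detections."""
    with open(image_path, "rb") as image_file:
        request = build_request(image_file.read(), url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Usage, assuming the function is deployed and ./imgs/demo.jpg exists:
# for det in detect("./imgs/demo.jpg"):
#     print(det["label"], det["confidence"], det["points"])
```

The JSON content type matters here, since the handler indexes `event.body` as a dictionary.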

3. Model_handler.py

…

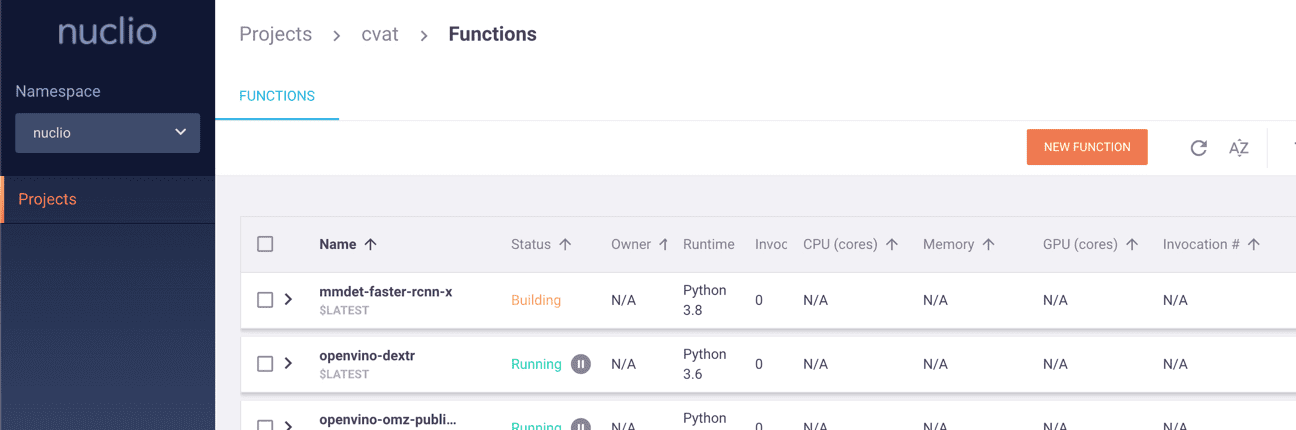

4. Deploy to Nuclio

Method 1)

$ nuctl deploy --project-name cvat \
    --path cvat/serverless/sixx/mmdet/faster_rcnn_x/nuclio/ \
    --volume `pwd`/serverless/common:/opt/nuclio/common \
    --platform local

Method 2)

$ serverless/deploy_cpu.sh \
    serverless/pytorch/facebookresearch/detectron2/retinanet/

$ docker ps -a
CONTAINER ID   IMAGE                        COMMAND                  CREATED          STATUS                    PORTS                     NAMES
264a2739eeb6   cvat/sixx.mm.fast:latest     "conda run -n mmdet3…"   47 seconds ago   Up 46 seconds (healthy)   0.0.0.0:49377->8080/tcp   nuclio-nuclio-mmdet-faster-rcnn-x
e71c29400634   gcr.io/iguazio/alpine:3.15   "/bin/sh -c '/bin/sl…"   5 hours ago      Up 5 hours                                          nuclio-local-storage-reader
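In the `docker ps` output, the PORTS column shows that Docker mapped host port 49377 to the container's port 8080, so when no port is pinned the function is reached on whichever host port was assigned. A small sketch for pulling that host port out of a PORTS value with a regular expression follows; `host_port` is a hypothetical helper, and it only handles the simple `host:port->port/tcp` form shown here.

```python
import re


def host_port(ports_column, container_port=8080):
    """Return the host port mapped to container_port, or None if absent."""
    pattern = r":(\d+)->" + str(container_port) + r"/tcp"
    match = re.search(pattern, ports_column)
    return int(match.group(1)) if match else None


print(host_port("0.0.0.0:49377->8080/tcp"))
```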